Natural Language Autoencoders

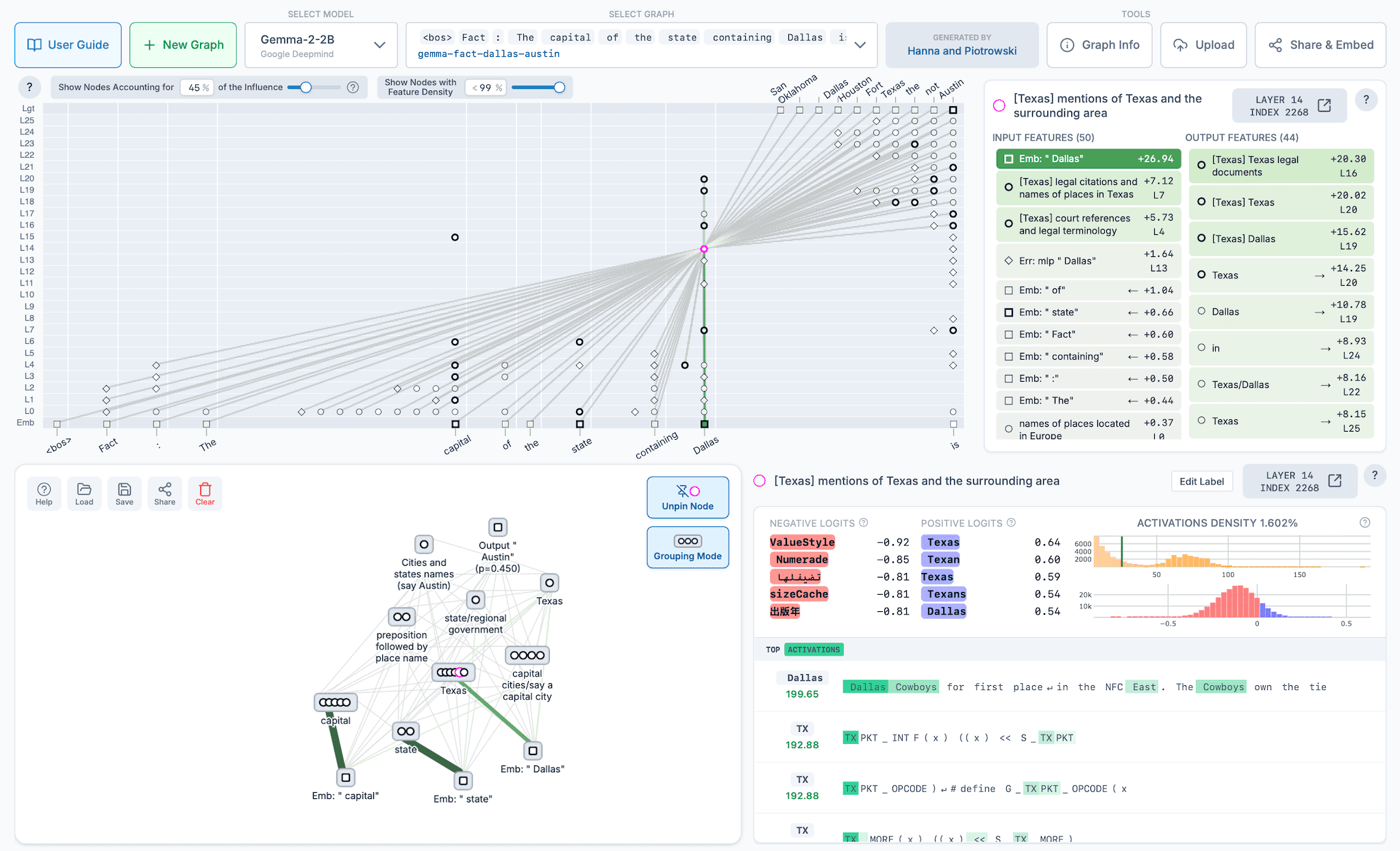

Translate a Model's Internal Thoughts Into Text

@misc{neuronpedia,
  title = {Neuronpedia: Interactive Reference and Tooling for Analyzing Neural Networks},
  year = {2023},
  note = {Software available from neuronpedia.org},
  url = {https://www.neuronpedia.org},
  author = {Lin, Johnny}
}